High-quality demonstration data is the primary bottleneck in robot learning research. Teleoperation-based collection yields only 10–20 episodes per hour, requires skilled operators, and suffers from quality variance due to operator fatigue and proficiency differences.

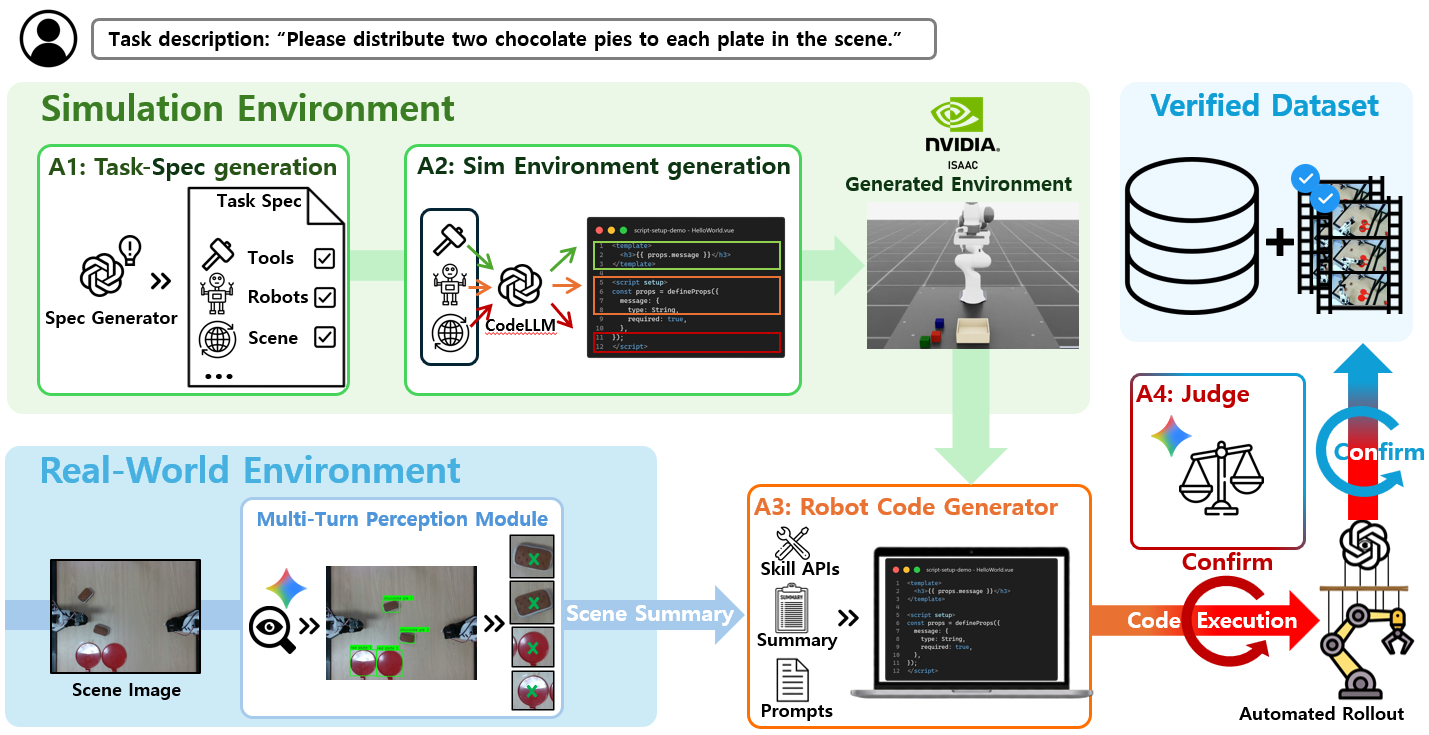

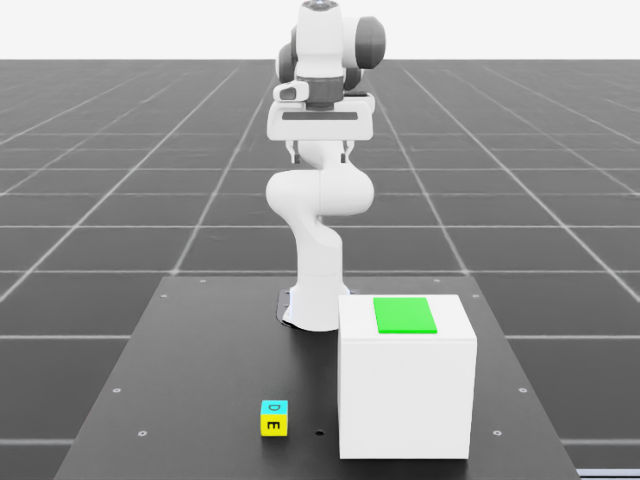

We present RAPIDS, a multi-agent system that fully automates the robot data collection and learning pipeline. From a single natural language instruction, RAPIDS autonomously converts the task into a structured specification (A-1), generates a simulation environment in IsaacLab (A-2), collects demonstration data via LLM-generated code in both simulation and the real world (A-3), validates quality through dual pre/post verification (A-4), executes on heterogeneous robots via a unified control framework (B-1), and fine-tunes VLA models with LoRA adapters (B-2).

RAPIDS produced 57 GB of simulation data across 13 tasks (78% episode success rate) and 10 GB of real-world data across 14 tasks on 3 robot platforms (SO-101 single-arm, SO-101 dual-arm, UR7e). It reduces new-task pipeline time from 4–8 weeks to 17 minutes and per-episode cost from $10–50 (operator labor) to about $0.10 (API cost).

RAPIDS end-to-end autonomous pipeline demo

Demo tasks: Stacking; Tray Stacking; Multi Pick&Place; Can Pick&Place; Distribute to Plates; 3-Block Stacking; Dual: Block Transfer

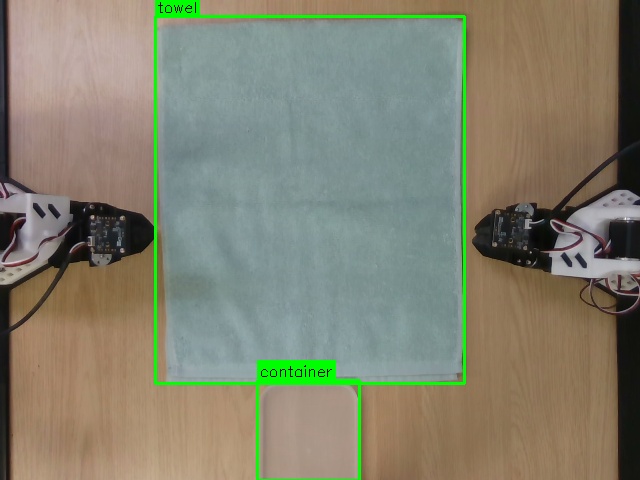

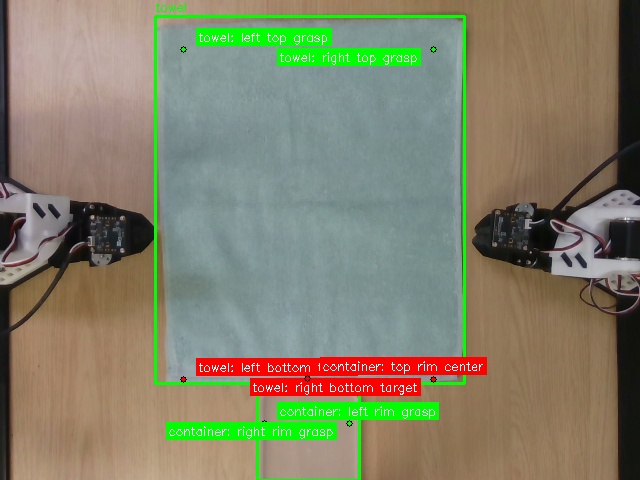

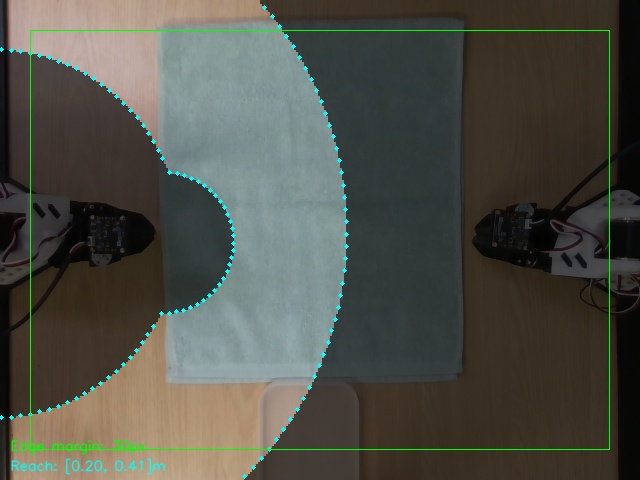

Demo tasks: Dual: Towel Folding; Battery Assembly; Nut & Bolt Assembly; Precision Marker; Precision Placement

6 specialized AI agents, end-to-end from natural language to trained VLA model.

RAPIDS 6-Agent Pipeline: NL Input → Task Spec (A-1) → Sim/Real Environment (A-2, A-3) → Verification (A-4) → Verified Dataset

NL input → 6 agents → trained VLA model. No manual steps. 4–8 weeks → 17 min.
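The six-agent flow can be pictured as an ordered list of stages that pass a shared state along. The sketch below is illustrative only: the stage IDs and roles come from the description above, while `Stage`, `_stub`, `run_pipeline`, the state keys, and the strictly linear ordering are assumptions made for this example, not the RAPIDS implementation.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


@dataclass(frozen=True)
class Stage:
    stage_id: str                   # agent label used in the text (A-1 ... B-2)
    role: str                       # one-line role, paraphrased from the text
    run: Callable[[State], State]   # hypothetical handler for this stage


def _stub(key: str) -> Callable[[State], State]:
    """Return a placeholder handler that only marks its output key as done."""
    def handler(state: State) -> State:
        return {**state, key: "done"}
    return handler


# Linear ordering is an assumption; the real system may interleave stages.
PIPELINE: List[Stage] = [
    Stage("A-1", "natural language -> structured task specification", _stub("task_spec")),
    Stage("A-2", "generate the IsaacLab simulation environment", _stub("sim_env")),
    Stage("A-3", "collect demonstrations via LLM-generated code", _stub("episodes")),
    Stage("A-4", "pre/post quality verification", _stub("verified")),
    Stage("B-1", "execute on heterogeneous robots", _stub("real_run")),
    Stage("B-2", "fine-tune a VLA model with LoRA adapters", _stub("vla_model")),
]


def run_pipeline(instruction: str, stages: List[Stage] = PIPELINE) -> State:
    """Run every stage in order and record which stage IDs were visited."""
    state: State = {"instruction": instruction, "trace": []}
    for stage in stages:
        state = stage.run(state)
        state["trace"] = state["trace"] + [stage.stage_id]
    return state


if __name__ == "__main__":
    result = run_pipeline("stack the red block on the blue block")
    print(" -> ".join(result["trace"]))
```

Replacing a stub with a real handler leaves the driver loop unchanged, which is the main appeal of treating each agent as a function from state to state.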

Auto self-refinement, 3-tier validation, AST + VLM dual verification, 10Hz real-time monitoring.
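To make the AST half of the dual verification concrete, here is a minimal sketch of a static pre-check on LLM-generated code using Python's standard `ast` module. The page does not say which rules RAPIDS enforces, so the deny-lists `FORBIDDEN_MODULES` and `FORBIDDEN_CALLS` and the function `precheck_generated_code` are hypothetical.

```python
import ast
from typing import List

# Hypothetical policy, chosen for illustration; not taken from RAPIDS.
FORBIDDEN_MODULES = {"os", "subprocess", "socket", "shutil"}
FORBIDDEN_CALLS = {"eval", "exec", "__import__", "open"}


def precheck_generated_code(source: str) -> List[str]:
    """Return a list of violations; an empty list means the static check passed."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return [f"syntax error at line {exc.lineno}: {exc.msg}"]

    problems: List[str] = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # "import a.b" is judged by its top-level package name.
            for alias in node.names:
                if alias.name.split(".")[0] in FORBIDDEN_MODULES:
                    problems.append(f"line {node.lineno}: import of '{alias.name}'")
        elif isinstance(node, ast.ImportFrom):
            if node.module and node.module.split(".")[0] in FORBIDDEN_MODULES:
                problems.append(f"line {node.lineno}: import from '{node.module}'")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            # Only direct calls by bare name are caught, e.g. eval(...).
            if node.func.id in FORBIDDEN_CALLS:
                problems.append(f"line {node.lineno}: call to '{node.func.id}'")
    return problems


if __name__ == "__main__":
    print(precheck_generated_code("import subprocess\nsubprocess.run(['ls'])"))
```

A check like this runs before any generated code touches the simulator or a robot. It cannot judge whether the resulting motion is correct, which is presumably where a VLM-based check on the outcome would come in.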

6-DOF IK, PhysX 5 physics, auto Domain Randomization, low-level safety limits.
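One common form of low-level safety limit is to clamp every commanded joint target to a position range and to a maximum change per control step. The page gives no details of how RAPIDS does this, so the sketch below is an assumption: the function name `clamp_joint_targets`, its parameters, and the numeric limits are hypothetical.

```python
from typing import List, Sequence, Tuple


def clamp_joint_targets(
    current: Sequence[float],
    target: Sequence[float],
    limits: Sequence[Tuple[float, float]],
    max_step: float,
) -> List[float]:
    """Clamp each joint target to its position range and to a per-step change.

    current, target: joint positions in radians.
    limits: (low, high) position limits per joint, in radians.
    max_step: largest allowed change per control step, in radians.
    """
    if not (len(current) == len(target) == len(limits)):
        raise ValueError("current, target and limits must have the same length")

    safe: List[float] = []
    for q, q_des, (low, high) in zip(current, target, limits):
        q_des = min(max(q_des, low), high)                  # position limit
        delta = min(max(q_des - q, -max_step), max_step)    # rate limit
        safe.append(q + delta)
    return safe


if __name__ == "__main__":
    # Joint 0 asks for a target beyond its range; joint 1 asks for a small move.
    print(clamp_joint_targets([0.0, 0.0], [2.0, -0.05],
                              [(-1.0, 1.0), (-1.0, 1.0)], max_step=0.1))
```

Placing the clamp below the code-generation layer means a faulty generated trajectory is limited before it reaches the motors, whatever the upstream agents produce.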

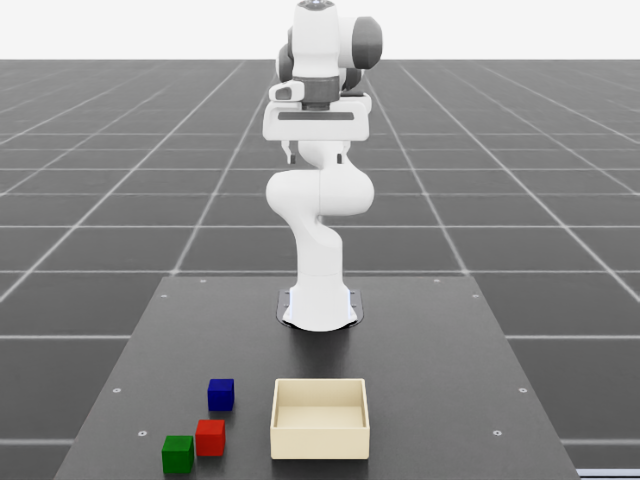

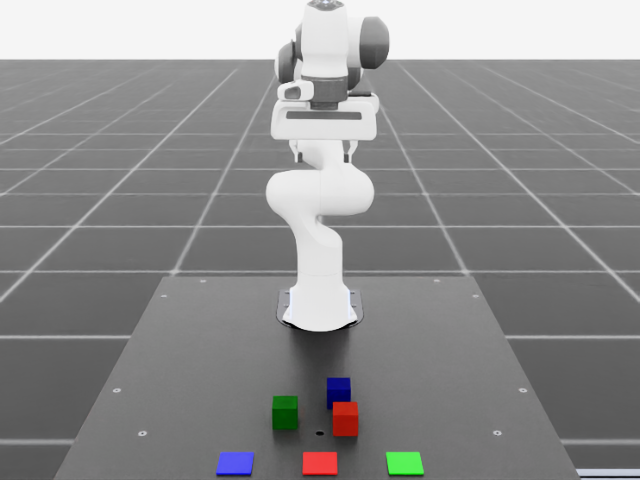

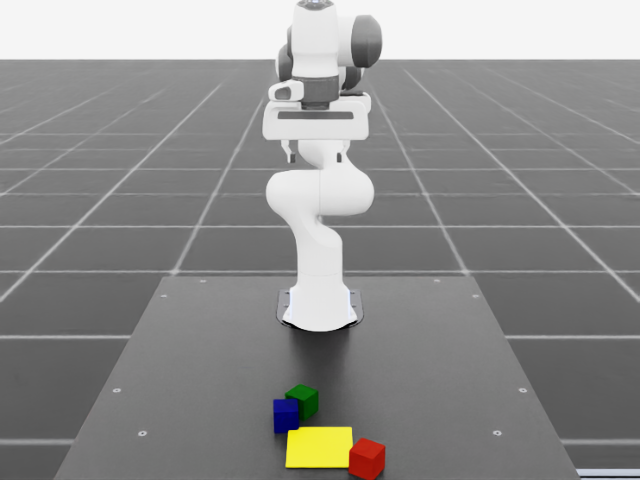

Stack in Tray; Color Sort; Multi Pick&Place; Drawer Pick&Place

Stacking; Tray Stacking; Multi Pick&Place; Can Pick&Place

Scene → Detect → Grasp Plan → Workspace → Complete

Autonomous Forward-Reset data collection

| Metric | Conventional | RAPIDS |
|---|---|---|
| Task definition time | Days to weeks (manual reward/env code) | 1 min (NL → YAML) |
| Environment setup time | 1–2 weeks (manual sim authoring) | 9 min (LLM generation + self-refinement) |
| Data collection throughput | 10–20 episodes/hr (teleop) | ~8.5 episodes/hr (fully autonomous) |
| Full pipeline (new task) | 4–8 weeks | 17 minutes |
| Cost per episode | $10–50 (operator labor) | ~$0.1 (API cost only) |
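The ratios implied by the comparison can be worked out directly. The short calculation below assumes that "weeks" means calendar weeks of 7 × 24 hours, which the page does not state; with working weeks, the time ratio would be smaller. The helper `ratio_range` is introduced only for this example.

```python
from typing import Tuple

MINUTES_PER_WEEK = 7 * 24 * 60  # calendar weeks; an assumption


def ratio_range(before_low: float, before_high: float, after: float) -> Tuple[float, float]:
    """Return the (low, high) improvement factor for a before-range and an after-value."""
    return before_low / after, before_high / after


# Full pipeline for a new task: 4-8 weeks versus 17 minutes.
time_low, time_high = ratio_range(4 * MINUTES_PER_WEEK, 8 * MINUTES_PER_WEEK, 17)

# Cost per episode: $10-50 versus about $0.1.
cost_low, cost_high = ratio_range(10, 50, 0.1)

# Throughput: ~8.5 autonomous episodes/hr relative to 10-20 teleoperated episodes/hr.
throughput_low, throughput_high = 8.5 / 20, 8.5 / 10

if __name__ == "__main__":
    print(f"pipeline time reduction: {time_low:.0f}x to {time_high:.0f}x")
    print(f"cost reduction:          {cost_low:.0f}x to {cost_high:.0f}x")
    print(f"throughput vs teleop:    {throughput_low:.2f}x to {throughput_high:.2f}x")
```

Under these assumptions the pipeline time shrinks by roughly 2,400× to 4,700× and the per-episode cost by 100× to 500×. The autonomous collection rate is below the teleoperation rate per hour, so the gain reported in the table comes from removing operator labor and setup time, not from faster episodes.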

| Component | Specification |
|---|---|
| GPU Server | NVIDIA H100 80GB × 4 |
| GPU Workstation | RTX 5090 / RTX 3090 |
| SO-101 Arms | 5+1 DOF × 2 units |
| UR7e Arm | 6+1 DOF × 1 unit |
| Cameras | Intel RealSense D435 × 1 |
| 3D Printer | Bambu Lab H2 |
| OS | Ubuntu 22.04 LTS |
| Simulator | Isaac Sim 5.1.0 + IsaacLab v2.3.2 |
| Robot Framework | ROS2 Humble |
| CUDA / Python | 12.1 / 3.11 (Docker) |
| ML Framework | PyTorch 2.7.0 |
| Data Format | LeRobot v3.0 (OXE compatible) |

SO-101 robot parts 3D printing

CSI Agent Lab, Sungkyunkwan University

14 top-tier publications in embodied agents and reinforcement learning over the past 3 years

@misc{rapids2026,
  author = {Yoo, Minjong and Kim, WooKyung and Kim, Soyoung and Cho, Hyunsuk and Yoon, Sihyung and Ahn, SangHyun and An, Seungchan},
  title = {RAPIDS: Robot AI Agents Pipeline for Intelligent Data Collection and Skill Learning},
  year = {2026},
  note = {2026 AI Co-Scientist Challenge Korea, Track 2},
  institution = {PRISM, CSI Agent Lab, Sungkyunkwan University}
}